Google introduces AI updates to search engine

Google has unveiled two updates, ‘Circle to Search’ and ‘Multisearch’, aimed at significantly enhancing the search experience on its renowned search engine.

Ms. Elizabeth Reid, Google’s Vice President of Search, said that the two updates, which resulted from testing in generative Artificial Intelligence (AI), are designed to make the search experience more intuitive.

Reid emphasized that Google’s mission is to organize the world’s information, ensuring its universal accessibility and usefulness.

Today, we’re introducing two updates to help you search any way, anywhere you want:

1️⃣ Circle to Search, a new way to search on Android

2️⃣ Gen AI-powered multisearch, so you can ask more complex questions about what you see ↓ https://t.co/Il48xd4UJX

Google (@Google), January 17, 2024

She also said that this goes hand in hand with Google’s ongoing advancements in AI, which help it to better understand information in many forms, whether text, audio, images or videos.

She noted that as part of this evolution, Google has streamlined the process of expressing search queries in a more natural and intuitive manner.

“For instance, you can search with your voice, or you can search with your camera using Lens. And recently, Google has been testing how generative AI’s ability to understand natural language makes it possible to ask questions on Search in a more natural way.

“Ultimately, we envision a future where you can search any way, anywhere you want. As we enter 2024, Google is introducing two major updates that bring this vision closer to reality,” she said.

‘Circle to Search’

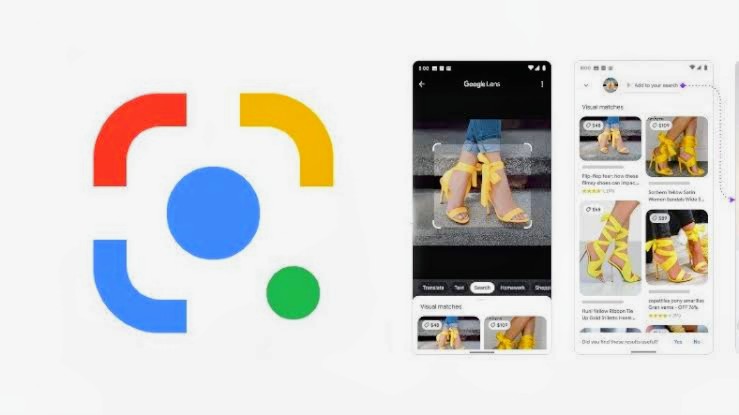

Reid highlighted the introduction of “Circle to Search” as an innovative feature, offering users a novel method to seamlessly search content on their Android phone screens without the need to switch between applications.

She also explained that users can effortlessly choose images, text, or videos using simple and natural gestures such as circling, highlighting, scribbling, or tapping, providing a more intuitive way to access the desired information.

The vice-president revealed that, effective immediately, users can leverage the power of AI and visual recognition technology through the Google app. By simply pointing a camera at an object, uploading a photo, or sharing a screenshot, users can now pose questions and receive search results that go beyond mere visual matches.

She emphasized that this capability empowers users to pose more intricate questions based on visual input, facilitating swift access to and comprehension of crucial information.

Multisearch harnesses artificial intelligence to provide users with in-depth insights and information. Instead of relying solely on visual similarities, it employs AI-powered algorithms to analyze images and deliver comprehensive search results.

This means that users can access in-depth information, context, and related content specific to the objects or scenes captured in their photos or screenshots.